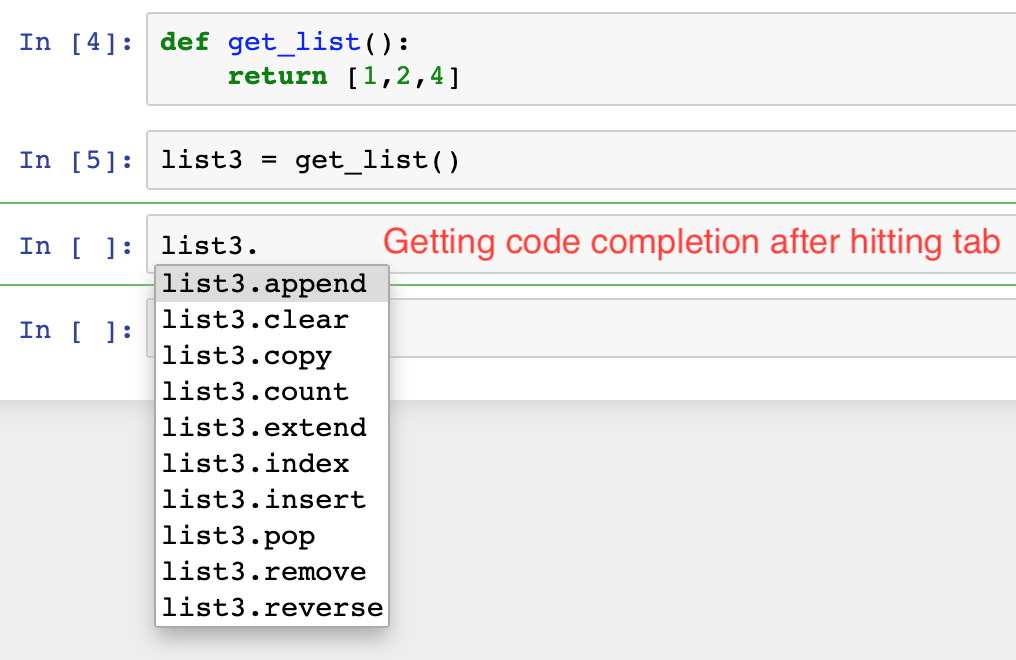

How to post jupyter notebook online code#

I’ve been writing about data science on Medium for just over two years. Writing, and technical writing in particular, can be time-consuming. Not only do you need to come up with an idea, write well, edit your articles for accuracy and flow, and proofread them; with technical articles you often also need to produce code to illustrate your explanations, ensure that it is accurate, and transfer that code from the tool you used to write it to your Medium post.

When I first started writing I found that the process could be very time-consuming, and it was difficult to maintain a regular publishing schedule around a full-time day job. Over time I have found some tools that have hugely reduced the time it takes me to create and publish an article, particularly one containing coded examples. These tools help me to achieve my goal of publishing one to two articles per week around my other life commitments. One of them was only released in May this year by Ted Petrou, but it is a game-changer if you write your code in Jupyter Notebooks.

For the Spark setup, add `~/.local/bin` to your PATH with `export PATH=$PATH:~/.local/bin`, then choose a Java version. This is important, because there are more variants of Java than there are cereal brands in a modern American store. Java 8 works with Ubuntu 18.04 LTS and spark-2.3.1-bin-hadoop2.7, so we will go with that version.

How to post jupyter notebook online install#

Python 3.4+ is required for the latest version of PySpark, so make sure you have it installed before continuing. (Earlier Python versions will not work.) Check your version with `python3 --version`. Then install Jupyter with `pip3 install jupyter`, and augment the PATH variable to launch Jupyter Notebook easily from anywhere.
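The version requirement and PATH setup described above can be sanity-checked from Python itself. The following is a minimal sketch, not part of the original tutorial: the helper names `python_is_supported` and `find_on_path` are hypothetical, and the 3.4 minimum is simply the figure quoted in the text.

```python
import shutil
import sys

# Minimum version quoted in the text above (an assumption taken from the article).
MIN_PYTHON = (3, 4)


def python_is_supported(version_info=None, minimum=MIN_PYTHON):
    """Return True if the (major, minor) version meets the minimum."""
    if version_info is None:
        version_info = sys.version_info
    return tuple(version_info[:2]) >= tuple(minimum)


def find_on_path(command):
    """Return the full path of a command found on PATH, or None if absent."""
    return shutil.which(command)


if __name__ == "__main__":
    print("Python version OK:", python_is_supported())
    # None here usually means the install directory is not on PATH yet.
    print("jupyter found at:", find_on_path("jupyter"))
```

If `find_on_path("jupyter")` returns `None` after installation, the directory holding the `jupyter` script is probably missing from PATH.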

How to post jupyter notebook online windows#

This tutorial assumes you are using a Linux OS. That's because in real life you will almost always run and use Spark on a cluster using a cloud service like AWS or Azure. It is wise to get comfortable with a Linux command-line-based setup process for running and learning Spark. If you're using Windows, you can set up an Ubuntu distro on a Windows machine using Oracle VirtualBox.

How to post jupyter notebook online how to#

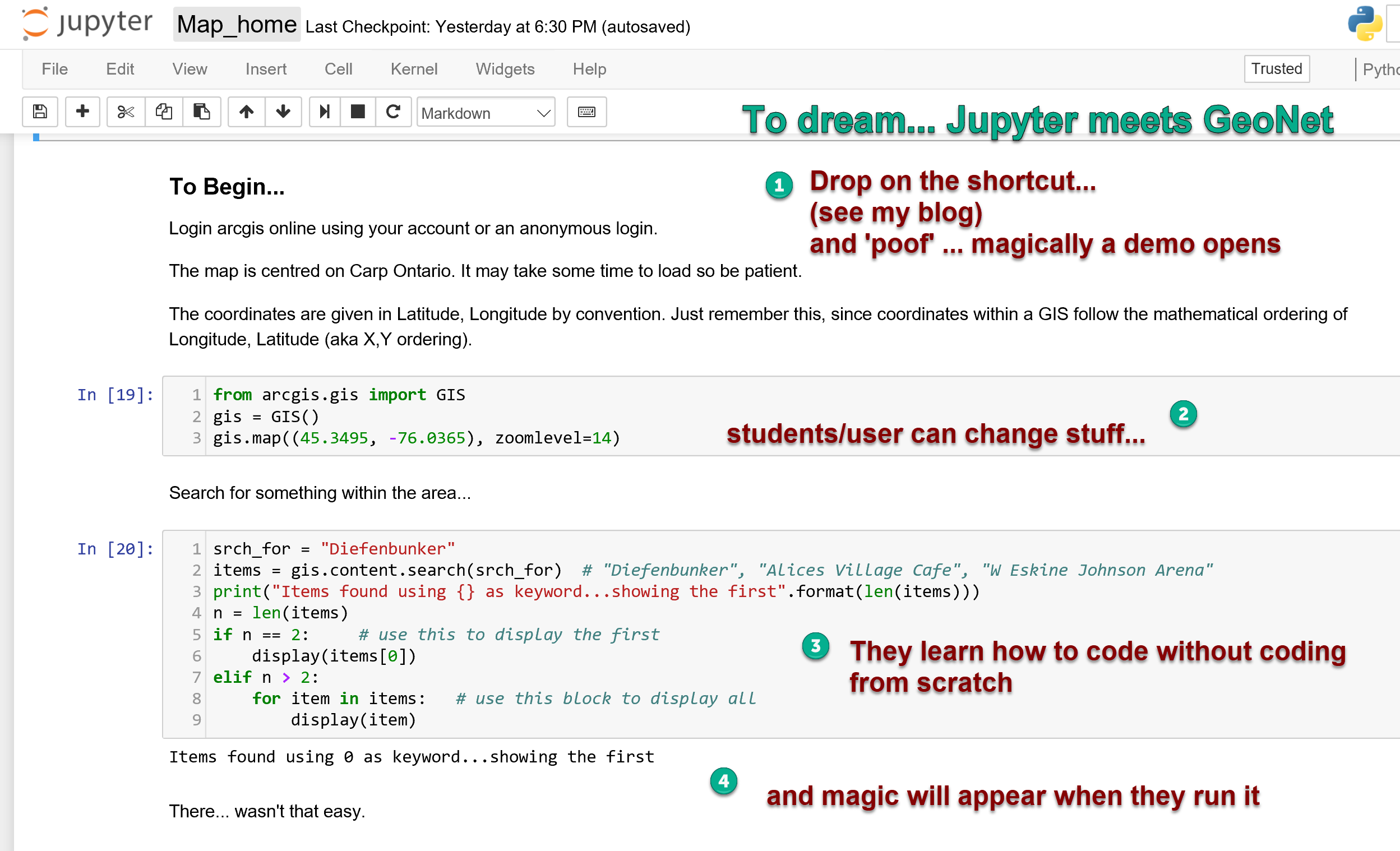

With PySpark, you can also easily interface with SparkSQL and MLlib for database manipulation and machine learning. It will be much easier to start working with real-life large clusters if you have internalized Spark's core concepts beforehand.

However, unlike most Python libraries, starting with PySpark is not as straightforward as pip install and import. Most users with a Python background take that workflow for granted, but the PySpark+Jupyter combo needs a little bit more love than other popular Python packages. In this brief tutorial, I'll go over, step by step, how to set up PySpark and all its dependencies on your system and integrate it with Jupyter Notebook.

Spark is also versatile enough to work with filesystems other than Hadoop, such as Amazon S3 or Databricks (DBFS). But the idea is always the same. You distribute (and replicate) your large dataset in small, fixed chunks over many nodes, then bring the compute engine close to them to make the whole operation parallelized, fault-tolerant, and scalable. By working with PySpark and Jupyter Notebook, you can learn all these concepts without spending anything.
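The chunk-and-parallelize idea can be illustrated on a single machine with nothing but the Python standard library. This is only an analogy under stated assumptions, not Spark itself: threads stand in for cluster nodes, there is no replication or fault tolerance, and the function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(data, chunk_size):
    """Split a list into consecutive fixed-size chunks (the last may be shorter)."""
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def process_chunk(chunk):
    """Work done next to one chunk of data: a partial sum of squares."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(data, chunk_size=4, workers=3):
    """Process each chunk in a worker thread, then combine the partial results."""
    chunks = split_into_chunks(data, chunk_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(process_chunk, chunks))
    return sum(partials)


if __name__ == "__main__":
    numbers = list(range(10))
    print(split_into_chunks(numbers, 4))     # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
    print(parallel_sum_of_squares(numbers))  # 285
```

Each worker only sees its own chunk, and the final answer is assembled from small partial results, which is the property that lets the same pattern scale out across many nodes.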

Remember, Spark is not a new programming language you have to learn; it is a framework working on top of HDFS. This presents new concepts like nodes, lazy evaluation, and the transformation-action (or "map and reduce") paradigm of programming. You could also run a Spark cluster on Amazon EC2 if you want more storage and memory.
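Lazy evaluation and the transformation-action split are easier to grasp with a toy example. The sketch below is plain Python written for illustration and is not the PySpark API: `LazyPipeline` is a hypothetical class in which `map` and `filter` (transformations) only record what to do, while `collect` and `reduce` (actions) actually run the work.

```python
from functools import reduce


class LazyPipeline:
    """Toy model of lazy transformations followed by an eager action."""

    def __init__(self, source, steps=None):
        self._source = source
        self._steps = list(steps or [])

    def map(self, fn):
        # Transformation: record the step, do no work yet.
        return LazyPipeline(self._source, self._steps + [("map", fn)])

    def filter(self, fn):
        # Transformation: record the step, do no work yet.
        return LazyPipeline(self._source, self._steps + [("filter", fn)])

    def _iterate(self):
        items = iter(self._source)
        for kind, fn in self._steps:
            items = map(fn, items) if kind == "map" else filter(fn, items)
        return items

    def collect(self):
        # Action: run every recorded step and return the results as a list.
        return list(self._iterate())

    def reduce(self, fn):
        # Action: run every recorded step and fold the results into one value.
        return reduce(fn, self._iterate())


if __name__ == "__main__":
    calls = []

    def traced_double(x):
        calls.append(x)  # records when the function really runs
        return 2 * x

    pipeline = LazyPipeline(range(5)).map(traced_double).filter(lambda v: v > 2)
    print(calls)               # [] because nothing has executed yet
    print(pipeline.collect())  # [4, 6, 8]
    print(calls)               # [0, 1, 2, 3, 4]
```

Deferring the work until an action is requested is what gives a framework the chance to plan the whole chain of steps before touching any data.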

However, if you are proficient in Python/Jupyter and machine learning tasks, it makes perfect sense to start by spinning up a single cluster on your local machine.

How to post jupyter notebook online free#

The promise of a big data framework like Spark is realized only when it runs on a cluster with a large number of nodes. Unfortunately, to learn and practice that, you have to spend money. Some options are:

- Amazon Elastic MapReduce (EMR) cluster with S3 storage
- Databricks cluster (paid version; the free community version is rather limited in storage and clustering options)

These options cost money, even to start learning (for example, Amazon EMR is not included in the one-year Free Tier program, unlike EC2 or S3 instances).